Google Offers TPUs Directly to Cloud Providers, Entering the Chip Rental Market

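Google is accelerating its push into AI chips and moving into direct competition with NVIDIA. According to The Information, Google is actively approaching smaller cloud providers to get them to deploy its TPU processors in their data centers. It has already reached a deal with UK-based Fluidstack and is seeking similar arrangements with other providers that lease NVIDIA chips, including Crusoe and CoreWeave, both of which serve OpenAI. To attract partners, Google is offering financial support, including a backstop of up to $3.2 billion. Its sixth-generation Trillium TPU is in high demand, and its seventh-generation Ironwood TPU is designed for large-scale inference.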

According to The Information, citing sources, Google has recently approached smaller cloud providers that primarily lease NVIDIA chips, urging them to also host its AI processors in their data centers. As the report notes, the goal may be to encourage adoption of Google’s TPUs, which might place Google in more direct competition with NVIDIA.

Sources cited in the report say Google has struck a deal with at least one cloud provider, London-based Fluidstack, to deploy its TPUs in a New York data center. Google’s efforts extend beyond Fluidstack: it has reportedly sought similar deals with other NVIDIA-focused providers, including Crusoe, which is building a data center full of NVIDIA chips for OpenAI, and CoreWeave, which leases NVIDIA hardware to Microsoft and has a contract to supply OpenAI.

The companies Google is targeting are largely emerging cloud providers that rely heavily on NVIDIA chips, the report highlights. To win them over, Google has reportedly offered incentives; in Fluidstack’s case, these support its TPU expansion. If Fluidstack can’t cover the lease for its new New York data center, Google would act as a “backstop” with up to $3.2 billion, according to the report.

Google’s TPU Strategy: From Internal Demand to External Growth

Google’s push to advance its own AI chip has been in motion for some time. As the report highlights, sources say the company has considered ramping up the TPU business to boost revenue and reduce its dependence on NVIDIA chips.

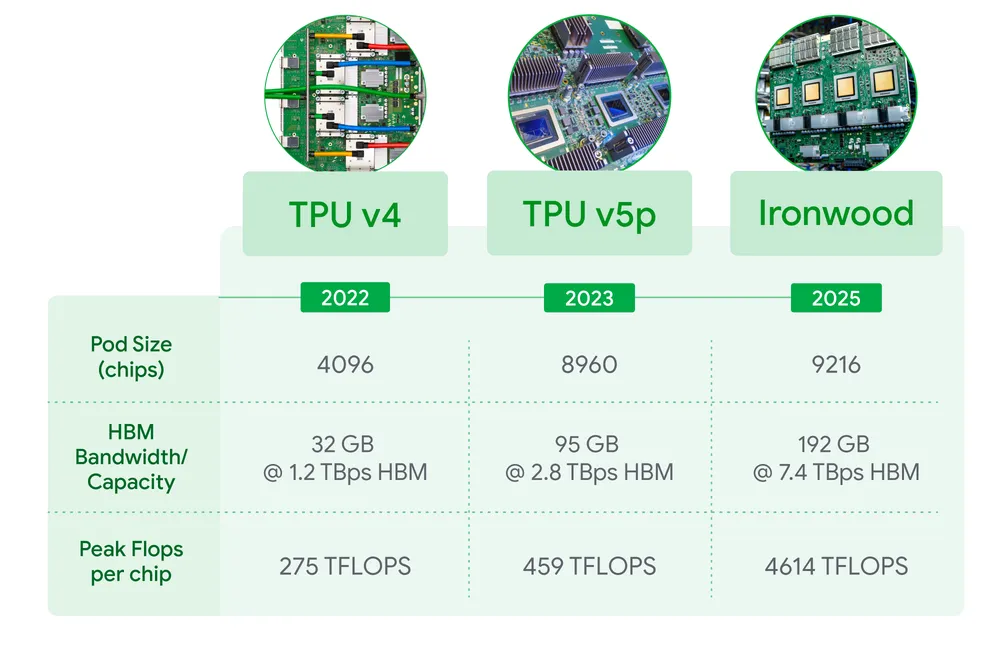

Its TPU and AI business is on the rise. Morningstar, citing analysts, estimates that Google’s TPU operations and its DeepMind AI research arm together could be valued at around $900 billion. As the report indicates, the sixth-generation Trillium TPUs, released in December 2024, are in high demand. Demand is also expected to increase for the seventh-generation Ironwood TPU, the company’s first designed for large-scale inference, the report adds.

As The Information points out, Google primarily uses TPUs for its own AI projects, such as the Gemini models, with internal demand soaring in recent years. The company has also long rented TPUs to outside firms — including Apple and Midjourney via Google Cloud, according to the report.

https://www.theinformation.com/articles/google-ramps-ai-chip-competition-nvidia

https://blog.google/products/google-cloud/ironwood-tpu-age-of-inference/

Today at Google Cloud Next 25, we’re introducing Ironwood, our seventh-generation Tensor Processing Unit (TPU) — our most performant and scalable custom AI accelerator to date, and the first designed specifically for inference. For more than a decade, TPUs have powered Google’s most demanding AI training and serving workloads, and have enabled our Cloud customers to do the same. Ironwood is our most powerful, capable and energy efficient TPU yet. And it's purpose-built to power thinking, inferential AI models at scale.

Ironwood represents a significant shift in the development of AI and the infrastructure that powers its progress. It’s a move from responsive AI models that provide real-time information for people to interpret, to models that provide the proactive generation of insights and interpretation. This is what we call the “age of inference” where AI agents will proactively retrieve and generate data to collaboratively deliver insights and answers, not just data.

Ironwood is built to support this next phase of generative AI and its tremendous computational and communication requirements. It scales up to 9,216 liquid-cooled chips linked with breakthrough Inter-Chip Interconnect (ICI) networking spanning nearly 10 MW. It is one of several new components of the Google Cloud AI Hypercomputer architecture, which optimizes hardware and software together for the most demanding AI workloads. With Ironwood, developers can also leverage Google’s own Pathways software stack to reliably and easily harness the combined computing power of tens of thousands of Ironwood TPUs.

Ironwood solves the AI demands of tomorrow

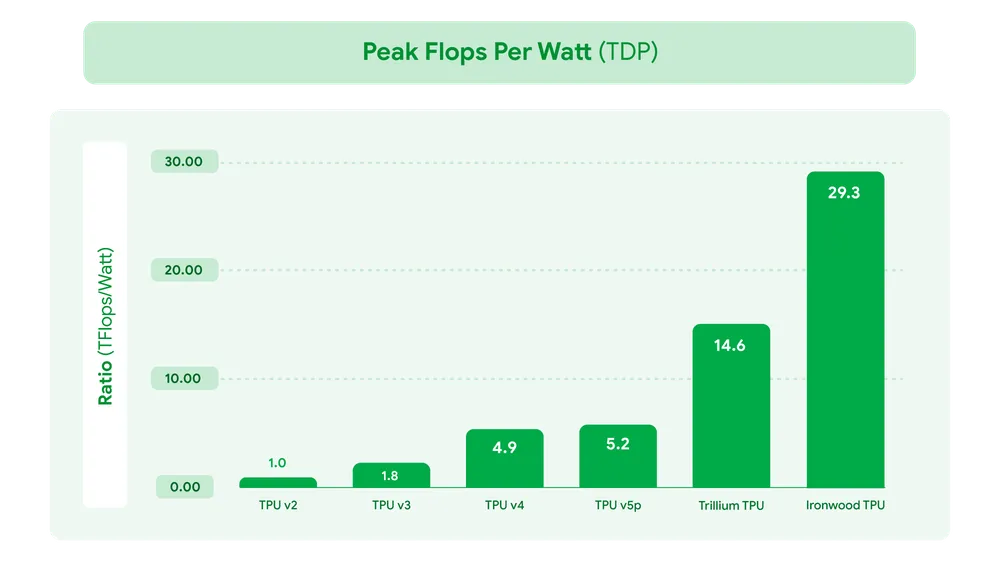

Ironwood represents a unique breakthrough in the age of inference with increased computation power, memory capacity, ICI networking advancements and reliability. These breakthroughs, coupled with a nearly 2x improvement in power efficiency, mean that our most demanding customers can take on training and serving workloads with the highest performance and lowest latency, all while meeting the exponential rise in computing demand. Leading thinking models like Gemini 2.5 and the Nobel Prize-winning AlphaFold all run on TPUs today, and with Ironwood we can’t wait to see what AI breakthroughs are sparked by our own developers and Google Cloud customers when it becomes available later this year.
